Services Won't Become Software

There's a growing consensus in venture right now that AI is transforming services businesses into software businesses, so much so that services companies are now considered venture-backable in ways they never were before. Several prominent VC firms framed it as a $4.6 trillion "service as software" opportunity a few years ago. General Catalyst committed $1.5 billion to acquiring and AI-enabling services companies across legal, IT, accounting, and more. Thrive launched a $1B+ vehicle to acquire and AI-enable services businesses, with OpenAI subsequently taking an ownership stake and embedding engineers directly into their portfolio companies.

The pitch is simple: services is a $16 trillion global market. Software is $1 trillion. If AI can bring software-like margins to services delivery, the upside is enormous. Software companies typically operate at 70-85% gross margins with deeply embedded, recurring customer relationships. Professional services firms run at 30-40% margins on a good day, often with project-based or episodic revenue. If AI can close even a fraction of that gap across a market that's 16x larger, the value creation is massive (and in some cases, it's already working).
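To make the arithmetic behind the pitch concrete, here is a rough back-of-the-envelope sketch in Python. It uses only the market sizes and margin ranges quoted above. The helper name `gross_profit_pool`, the single blended margin per market, and the 10-point lift are simplifying assumptions for illustration, not a market model.

```python
# Back-of-the-envelope sketch. Market sizes and margin bands are the
# figures quoted in the text, expressed in trillions of dollars.
SERVICES_TAM = 16.0  # $16T global services market
SOFTWARE_TAM = 1.0   # $1T software market


def gross_profit_pool(tam, margin):
    """Hypothetical helper: gross profit ($T) if the whole market ran at one blended gross margin."""
    return tam * margin


# Gross profit implied by the low and high ends of each quoted margin band.
software_pool = (gross_profit_pool(SOFTWARE_TAM, 0.70), gross_profit_pool(SOFTWARE_TAM, 0.85))
services_pool = (gross_profit_pool(SERVICES_TAM, 0.30), gross_profit_pool(SERVICES_TAM, 0.40))

# Extra gross profit from lifting services margins by 10 points
# (the 10-point figure is an assumption chosen only for illustration).
ten_point_lift = gross_profit_pool(SERVICES_TAM, 0.10)

print(f"Software gross profit pool: ${software_pool[0]:.2f}T to ${software_pool[1]:.2f}T")
print(f"Services gross profit pool: ${services_pool[0]:.2f}T to ${services_pool[1]:.2f}T")
print(f"10-point services margin lift: ${ten_point_lift:.2f}T")
```

Under these assumptions, a 10-point margin lift across all of services would add roughly $1.6T of gross profit, more than the entire software gross profit pool at the top of its margin range. That is the sense in which closing even a fraction of the gap is a large number.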

It's clear the opportunity is real. But the discourse has gotten ahead of itself. What's actually happening is more nuanced than "services become software." AI is making services businesses better services businesses. The margins improve. The leverage per person increases. But they don't magically become software companies. Even as the tech takes on more of the execution layer and the ratio of people to output improves with each generation of model, the underlying dynamics of services businesses persist. The clients aren't just paying for output. They're paying for credentials, brand equity, institutional trust, and someone to own the outcome - and the liability that comes with it.

And that's fine. Because the markets are so much bigger that you don't need software margins to build massive outcomes.

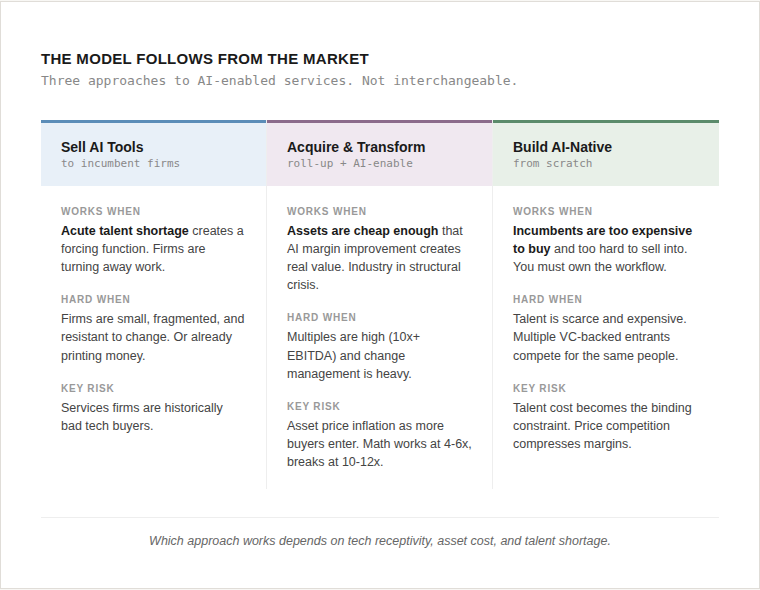

So far, I've observed three models emerging to capture this opportunity: selling AI tools to incumbent services firms, building AI-native services firms from scratch, and acquiring existing services firms and AI-enabling them (roll-ups). Each has merit, but each carries distinct risks that investors need to underwrite carefully. Before getting into which model fits where, it's worth pressure-testing the opportunity itself. Here are the risks I think investors need to consider:

- Not all of the TAM surplus is addressable.

- Not all margin expansion is durable.

- Growth still depends on people.

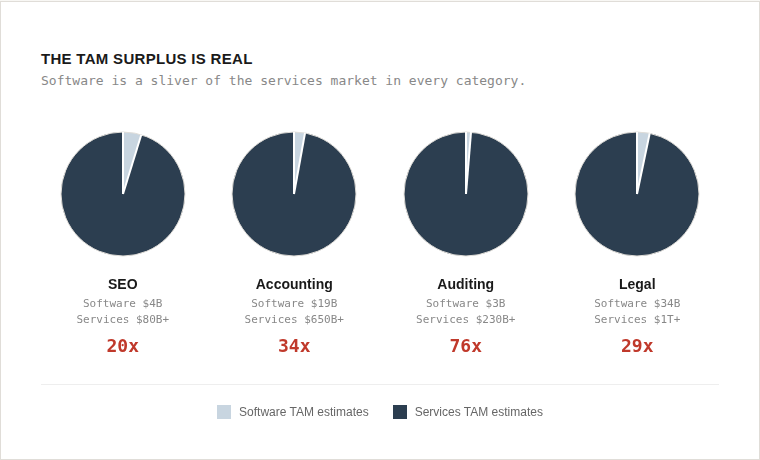

The TAM Surplus Is Real

In almost every professional services category, the software market is a fraction of the services market. This is well known, documented, and understood.

When you invest in software companies, you're underwriting to the software TAM. When you invest in AI-enabled services businesses, you're underwriting to the labor market, and the labor market is almost always dramatically larger.

But equating TAM surplus to addressable opportunity is one of the most common mistakes in the space. Understanding which parts of that surplus are truly addressable by AI, and which are structurally durable, is the more important question.

But Not All of the TAM Is Addressable

Here's what gets lost in the "services become software" narrative: a meaningful share of professional services spend was never about output in the first place.

As a former paralegal and consultant, I can tell you that companies hire Big Four firms not because the audit itself is uniquely valuable, but because 'we followed expert guidance' is a defensible position if something goes wrong. They hire more expensive external counsel that regulators know and trust. They bring in consultants to independently recommend layoffs so the decision isn't pinned on internal leadership. These might appear to be inefficiencies, but they're features baked into how professional services actually work.

And it's not just about liability. It's about credibility, external validation, and institutional trust. Companies pay advisory and consulting firms for benchmarking and peer intelligence, not because the data is impossible to find, but because having an independent third party contextualize what competitors are doing carries weight that internal analysis doesn't. A CFO presenting a compensation restructuring has a much easier time when they can say "according to McLagan's market data" than when they say "according to our internal analysis." The premium isn't for the information. It's for the credibility of the source.

Even if AI can do the analytical work, it can't do the blame-absorbing work. A board can't point to an AI model and say "we relied on expert guidance." So the question becomes: how much of the services TAM is actually about output quality, and how much is about liability transfer, political cover, and credentialing? The portion that's truly about output is addressable by AI. The portion that's about cover (CYA) may be more durable than it looks, which means the addressable services market for AI-enabled businesses may be meaningfully smaller than the headline numbers suggest. That cuts both ways: it's a ceiling on AI-native disruption, but it's also a differentiator for services businesses that own the client relationship and the liability (if you haven't yet, read Nihar's piece on Will Vertical AI Survive?).

Not All Margin Expansion Is Durable

Even within the addressable TAM, margin expansion may prove partially transitory. As competitors adopt the same AI capabilities, services will re-commoditize on price. And as clients realize AI is doing work they previously paid junior staff for, they'll demand those savings be passed through. The margin compression comes from both sides.

The durable margin lives in the premium and liability layer that sits on top of the AI-automated output, not in the automation itself. Consider two AI-enabled accounting firms. One automates tax preparation and passes cost savings to clients, achieving 60% gross margins. The other automates the same work but also employs CPAs who sign returns, carry E&O insurance, and own the client relationship. Its margins are lower, maybe 45%, because it bears the cost of that liability layer. But those margins are far stickier. Clients aren't just paying for output that they could get cheaper elsewhere. They're paying for someone to stand behind the work. The first firm is vulnerable to any competitor with access to the same models. The second has a structural moat in the form of professional trust and accountability that AI alone can't replicate.
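A simple sensitivity check shows why the lower starting margin can be the stickier one. The sketch below is illustrative only. The 60% and 45% starting margins come from the two-firm example above, but the function `margin_after_price_cut` and the price-cut percentages are hypothetical, chosen purely to show the mechanics, and delivery cost per engagement is assumed to stay flat.

```python
def margin_after_price_cut(gross_margin, price_cut):
    """Gross margin after prices fall by `price_cut` while delivery cost per engagement stays flat."""
    cost_share = 1.0 - gross_margin  # cost as a fraction of the original price
    new_price = 1.0 - price_cut      # new price as a fraction of the original price
    return 1.0 - cost_share / new_price


# Starting margins from the two-firm example in the text.
automation_only_firm = 0.60
liability_layer_firm = 0.45

# Assumed scenario (not observed data): competition forces the automation-only
# firm into a 25% price cut, while the liability-bearing firm concedes only 5%.
automation_only_after = margin_after_price_cut(automation_only_firm, 0.25)
liability_layer_after = margin_after_price_cut(liability_layer_firm, 0.05)

print(f"Automation-only firm: {automation_only_after:.1%}")
print(f"Liability-layer firm: {liability_layer_after:.1%}")
print(f"Remaining gap: {automation_only_after - liability_layer_after:.1%}")
```

Under these assumed cuts, the 15-point margin gap between the two firms shrinks to under 5 points, and any deeper price pressure on the first firm narrows it further. The numbers are made up, but the direction is what the two-firm comparison is pointing at.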

And the competitive pressure isn't just coming from other startups. Anthropic recently launched Claude for Excel with pre-built agent skills that help financial analysts, accountants, and wealth managers with DCF modeling, comparable company analysis, and due diligence data packs, alongside connectors to S&P Capital IQ, Moody's, and PitchBook. And earlier this week, OpenAI announced multi-year "Frontier Alliance" partnerships with Accenture, BCG, Capgemini, and McKinsey to deploy agents directly into corporate workflows. The foundation model companies aren't waiting for startups to build the services layer on top of them. They're going after the workflows directly. If the next generation of models can autonomously complete an audit workpaper or draft a legal brief at production quality, "AI-enabled services" becomes a transitional state, not an endpoint.

We've launched Claude for Financial Services.

— Anthropic (@AnthropicAI) July 17, 2025

Claude now integrates with leading data platforms and industry providers for real-time access to comprehensive financial information, verified across internal and industry sources. pic.twitter.com/Gu3bSXpD9z

we're partnering with @bcg @mckinsey @accenture and @capgemini to deploy openai frontier to enterprises globally https://t.co/5dKA0LViti

— Brad Lightcap (@bradlightcap) February 23, 2026

This is another reason the liability layer matters. Foundation models can replicate output. They can't replicate the professional relationship, the E&O coverage, or the regulatory credentialing that clients are actually paying the premium for.

Growth Still Depends on People

These businesses still depend on people. AI raises the ceiling on what each person can handle, but it doesn't remove it. An AI-enabled audit firm can have a CPA manage significantly more engagements than before, but you still need the CPA. The margin expansion curve likely has diminishing returns - the big gains come early and flatten fast. If margins plateau at 55-65%, that's genuinely compelling in an $87B or $1T+ market. Nobody knows exactly where the curve flattens, and that uncertainty is the core risk.

What makes this even harder is that the talent these businesses depend on is scarce and getting more expensive. Counterintuitively, AI tools may actually make this worse. Take legal as an example. Vertical AI tools like Harvey and Legora are selling directly into incumbent firms, making elite practitioners more productive where they already are. A Big Law partner using AI to handle 3x the caseload with less grunt work is making more money, doing more interesting work, and has less reason to leave. The tools that were supposed to disrupt incumbents end up entrenching the talent inside them.

Meanwhile, multiple AI-native law firms are all trying to recruit those same partners away - and competing against each other to do it. If ten VC-backed AI-native firms all raise significant capital in the same category, you get talent cost inflation on the supply side as they bid for a finite pool of licensed professionals, and pricing pressure on the demand side as they compete for the same clients. The margin advantage that made the model attractive in the first place gets competed away - not just by incumbents, but also by other startups running the same playbook. It's the classic paradox of consensus trades: the more capital that chases the thesis, the harder the thesis is to execute.

So Which Business Models Are Best Positioned?

As an early-stage investor, the question I'm underwriting isn't "will this become a software business?" It's "can this founder build enough leverage to sustain gross margins north of 50% with recurring revenue at scale, in a market large enough that those margins generate venture-scale outcomes? And can they build defensibility through data moats, liability relationships, or workflow control that makes switching costly?" In a $1T+ legal market or a $650B accounting market, the answer can absolutely be yes. In a $3B niche, probably not.

Given the risks above, the CYA and liability framework becomes a useful lens. The companies most likely to endure are those that own both the liability and the output, not just the workflow layer in between (once again, shoutout to Nihar). But which model gets you there depends on the industry dynamics.

Selling AI tools to incumbent services firms is harder than it sounds. These businesses are often small, fragmented, and resistant to change. And if they're already printing cash, there's no urgency to adopt new tech. You need a forcing function. Accounting is the clearest example right now. 300,000+ accountants have left the field since 2020, 75% of CPAs are nearing retirement age, and firms are turning away work. When the alternative is lost revenue, the appetite for AI tools goes way up. That's why we backed Basis and InScope, both selling AI-powered tools to accounting professionals. They don't take on the liability themselves (it still lies with the services firm). But given the forcing function, their customers let them embed into the workflow deeply enough that switching becomes operationally painful, which creates defensibility through workflow control rather than liability ownership.

Building AI-native services firms from scratch faces a different challenge. You're asking clients to trust a startup with work they've historically entrusted to firms with decades of brand equity, regulatory relationships, and professional credentialing. In services where the CYA dynamic is strong, that's an especially steep hill. That's why the AI-native path tends to work best in industries where you can sidestep the trust problem by owning the operation outright rather than selling services under an unproven brand. Insurance brokerage is a good example of where it works. As Elliot at DocShield (one of our portfolio companies at BTV) put it, insurance brokerages are self-evidently high-quality businesses with recurring customers and very low churn, so they're expensive even when subscale. A $2 million EBITDA brokerage can trade at 10x+ multiples, making a roll-up strategy capital-inefficient. Selling software into them is equally tough when the average midsize brokerage has about 0.5 IT employees and lives in a walled-garden agency management system. For DocShield, owning the brokerage and building the AI system end-to-end was the only path that made sense.

Roll-ups aren't a bad strategy - they're just a better fit for PE, where the fund structure, hold periods, and operational playbooks are designed for exactly this kind of asset transformation. VC timelines and capital structures make it much harder to execute. That said, there are cases where the dynamics make roll-ups the right approach. Meroka, another BTV portfolio company, works with independent physician practices. The market context is stark. Private practice is in a structural crisis: independent practices can't compete with scaled players, lack negotiating leverage, and have no succession plan as older doctors retire. Meroka offers an alternative path: transitioning practices to employee ownership via an ownership trust, ensuring permanent independence from PE, while layering in modern technology and AI as a management services organization. Software alone won't fix this. In fact, without intervention, AI will just accelerate consolidation and make the PE problem worse. You have to own the practice to transform it.

The Meroka model works because it addresses the full evaluation framework at once. The ownership trust creates the liability relationship and defensibility that keeps practices from churning to PE buyers. The management services layer generates recurring revenue that scales with each new practice. And the structural crisis in private practice, with aging doctors, no succession pipeline, and PE pressure on all sides, is the forcing function that makes adoption urgent rather than optional.

The model follows from the market, not the other way around.

Where I Net Out

I believe you can make a ton of money as a VC investing in tech-enabled services businesses. I'm also not under the illusion that those businesses will ever have the same margin profile as software, or that the services TAMs will stay static as AI reshapes how and why companies buy professional services. These businesses will inherently have ceilings.

The mistake is dismissing these businesses because they're 'not software,' or investing in them while pretending they'll eventually get there. The right approach is to underwrite them for what they are: large-market, improving-margin, increasingly recurring, AI-leveraged services businesses.

And if you're an early-stage investor, in some ways, nothing has changed. At pre-seed and seed, you've always been underwriting margin trajectory, not current margins. You've always been betting that the businesses you back today will look fundamentally different in five years. The difference now is that AI gives these founders a structural tailwind to gross margin expansion that didn't exist in traditional services businesses. The models get better, the execution layer gets more automated, and the economics improve almost by default. That's a real advantage, even if it comes with a ceiling.

The framework that has made software so attractive to investors is straightforward: big markets, high margins, recurring revenue, low cost to scale. AI-enabled services businesses won't check every one of those boxes. They may never have 80% gross margins or scale without adding headcount. But in markets that are 20-70x larger than their software equivalents, with margins trending north of 50%, recurring client relationships, and defensibility rooted in owning the liability and the client relationship, they don't need to.

Services won't become software. But they will become more software-like. And in markets this big, that should be more than enough.